Welcome to the VQA Challenge 2021!

Deadline: Friday, May 7, 2021 23:59:59 GMT

Papers reporting results on the VQA v2.0 dataset should:

1) Report test-standard accuracies, which can be calculated using either of the non-test-dev phases, i.e., "Test-Standard" or "Test-Challenge".

2) Compare their test-standard accuracies with those on the Test-Standard 2020 leaderboard, the Test-Standard 2019 leaderboard, the test2018 leaderboard and the test2017 leaderboard.

Note: We have fixed a minor bug in the evaluation script that resulted in inconsistent processing of ground-truth and predicted answers in some cases (please see this commit for the exact change in the script). As a result, the new evaluation server might report slightly different accuracies (a difference of ~0.1%) compared to the old evaluation server.

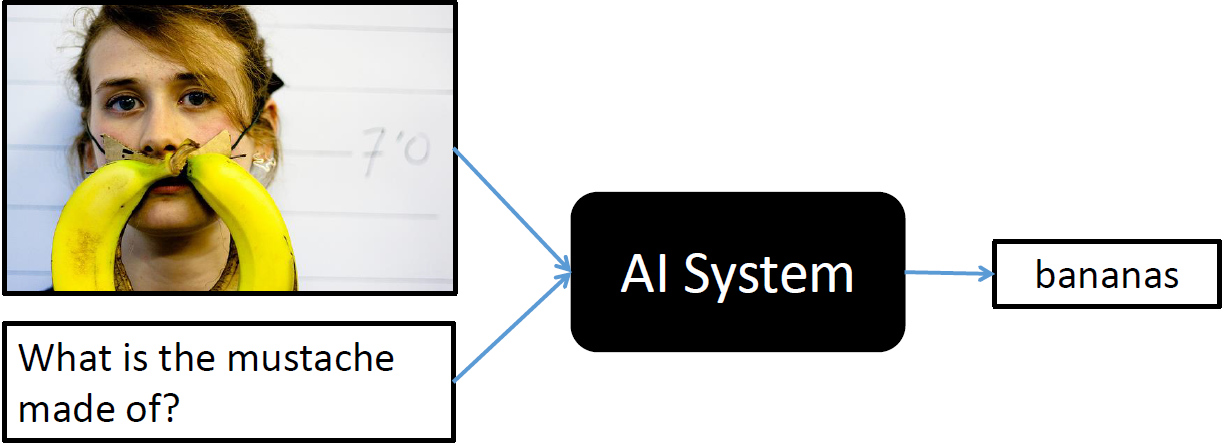

Overview

We are pleased to announce the Visual Question Answering (VQA) Challenge 2021. Given an image and a natural language question about the image, the task is to provide an accurate natural language answer.

The challenge is hosted on EvalAI. Challenge link: https://evalai.cloudcv.org/web/challenges/challenge-page/830/overview

The VQA v2.0 train, validation and test sets, containing more than 250K images and 1.1M questions, are available on the download page. All questions are annotated with 10 concise, open-ended answers each. Annotations on the training and validation sets are publicly available.

VQA Challenge 2021 is the sixth edition of the VQA Challenge. The previous five editions were organized over the past five years, and their results were announced at the VQA Challenge Workshop at CVPR 2020, CVPR 2019, CVPR 2018, CVPR 2017 and CVPR 2016. More details about past challenges can be found here: VQA Challenge 2020, VQA Challenge 2019, VQA Challenge 2018, VQA Challenge 2017 and VQA Challenge 2016.

Answers to some common questions about the challenge can be found in the FAQ section.

Dates

Mar 7, 2021 VQA Challenge 2021 launch

May 7, 2021 Submission deadline at 23:59:59 UTC

Jun 19, 2021 Winners' announcement at the VQA Workshop, CVPR 2021

After the challenge deadline, the results of all challenge participants on the test-standard split will be made public on a test-standard leaderboard.

Challenge Guidelines

Following COCO, we have divided the test set for VQA v2.0 into a number of splits, including test-dev, test-standard, test-challenge, and test-reserve, to limit overfitting while giving researchers more flexibility to test their systems. Test-dev is used for debugging and validation experiments and allows a maximum of 10 submissions per day (according to the UTC timezone). Test-standard is the default test data for the VQA competition. When comparing to the state of the art (e.g., in papers), results should be reported on test-standard. Test-standard is also used to maintain a public leaderboard that is updated upon submission. Test-reserve is used to protect against possible overfitting. If there are substantial differences between a method's scores on test-standard and test-reserve, this will raise a red flag and prompt further investigation. Results on test-reserve will not be publicly revealed. Finally, test-challenge is used to determine the winners of the challenge.

The evaluation page lists detailed information regarding how submissions will be scored. The evaluation servers are open. As in the last few years, we are hosting the evaluation servers on EvalAI, developed by the CloudCV team. EvalAI is an open-source web platform designed for organizing and participating in challenges to push the state of the art on AI tasks. We encourage you to submit to the "Test-Dev" phase first to make sure that you understand the submission procedure, as it is identical to the full test set submission procedure. Note that the "Test-Dev" and "Test-Challenge" evaluation servers do not have public leaderboards.
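The scoring rules themselves live on the evaluation page and are not reproduced here. As rough orientation only, the sketch below assumes the commonly cited VQA accuracy rule, under which a predicted answer counts as fully correct when at least 3 of the 10 human annotators gave it. The function name `vqa_accuracy` is hypothetical, and the official script additionally normalizes answers and averages over annotator subsets, so its numbers can differ from this simplified version.

```python
from collections import Counter


def vqa_accuracy(predicted: str, human_answers: list[str]) -> float:
    """Simplified VQA accuracy: min(#annotators who gave the answer / 3, 1).

    Assumption: only lowercasing and whitespace stripping are applied here;
    the official evaluation script performs more thorough normalization.
    """
    counts = Counter(a.strip().lower() for a in human_answers)
    return min(counts[predicted.strip().lower()] / 3.0, 1.0)


# Made-up annotations for a single question (10 answers, as in VQA v2.0).
answers = ["yes"] * 2 + ["no"] * 8
print(vqa_accuracy("yes", answers))    # 2 of 10 annotators agree: partial credit
print(vqa_accuracy("no", answers))     # 8 of 10 annotators agree: full credit
print(vqa_accuracy("maybe", answers))  # no annotator agrees: no credit
```

A per-question score like this would then be averaged over all questions in a split to obtain the aggregated accuracy.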

To enter the competition, first you need to create an account on EvalAI.

We allow people to enter our challenge either privately or publicly. Any submissions to the "Test-Challenge" phase will be considered to be participating in the challenge. For submissions to the "Test-Standard" phase, only ones that were submitted before the challenge deadline and posted to the public leaderboard will be considered to be participating in the challenge.

Before uploading your results to EvalAI, you will need to create a JSON file containing your results in the correct format as described on the evaluation page.
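The authoritative format is the one described on the evaluation page. As a minimal sketch, the code below assumes the results file is a JSON list with one record per question, each holding a `question_id` and an `answer` string. The helper names `write_results` and `check_results`, the file name `vqa_results.json`, and the sample ids are all hypothetical.

```python
import json
import os
import tempfile


def write_results(predictions, path):
    """Write {question_id: answer} predictions as a JSON list of records."""
    results = [
        {"question_id": int(qid), "answer": str(answer)}
        for qid, answer in sorted(predictions.items())
    ]
    with open(path, "w", encoding="utf-8") as f:
        json.dump(results, f)


def check_results(path):
    """Return the number of entries, raising ValueError on a malformed file."""
    with open(path, encoding="utf-8") as f:
        results = json.load(f)
    if not isinstance(results, list):
        raise ValueError("top-level JSON value must be a list")
    seen = set()
    for entry in results:
        if not isinstance(entry, dict) or set(entry) != {"question_id", "answer"}:
            raise ValueError(f"unexpected entry: {entry!r}")
        if entry["question_id"] in seen:
            raise ValueError(f"duplicate question_id: {entry['question_id']}")
        seen.add(entry["question_id"])
    return len(results)


# Made-up question ids and answers, written to a temporary directory.
with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "vqa_results.json")
    write_results({1: "yes", 2: "2"}, path)
    print(check_results(path))
```

Running a local check like this before uploading can catch malformed files without spending one of the limited daily submissions.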

To submit your JSON file to the VQA evaluation servers, click on the “Submit” tab on the VQA Challenge 2021 page on EvalAI. Select the phase ("Test-Dev", "Test-Standard" or "Test-Challenge"). Then select the JSON file to upload, fill in the required fields such as "method name" and "method description", and click “Submit”. After the file is uploaded, the evaluation server will begin processing. To view the status of your submission, go to the “My Submissions” tab and choose the phase to which the results file was uploaded. Please be patient: the evaluation may take some time to complete (~4 min). If the status of your submission is “Failed”, please check the "Stderr File" for the corresponding submission.

After evaluation is complete and the server shows a status of “Finished”, you will have the option to download your evaluation results by selecting “Result File” for the corresponding submission. The "Result File" will contain the aggregated accuracy on the corresponding test split (the test-dev split for the "Test-Dev" phase; the test-standard and test-dev splits for both the "Test-Standard" and "Test-Challenge" phases). If you want your submission to appear on the public leaderboard, please submit to the "Test-Standard" phase and check the box under "Show on Leaderboard" for the corresponding submission.

Please limit the number of entries to the challenge evaluation server to a reasonable number, e.g., one entry per paper. To avoid overfitting, submissions are limited to 1 upload per day (according to the UTC timezone) and a maximum of 5 submissions per user. It is not acceptable to create multiple accounts for a single project to circumvent this limit. The one exception is a group that publishes two papers describing unrelated methods; in this case, both sets of results can be submitted for evaluation. Test-Dev is more permissive and allows 10 submissions per day.
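The limits above can be sketched as a small check a team might run before uploading. This is an illustration only: the `LIMITS` table and the `can_submit` helper are hypothetical, the sketch assumes the limits apply separately to each phase, and it treats Test-Dev as having no overall cap because none is stated here. EvalAI enforces the real limits.

```python
from datetime import datetime, timezone

# Limits as stated in the guidelines; None means no overall cap is stated.
LIMITS = {
    "Test-Dev": {"per_day": 10, "total": None},
    "Test-Standard": {"per_day": 1, "total": 5},
    "Test-Challenge": {"per_day": 1, "total": 5},
}


def can_submit(phase, previous, now):
    """Return True if one more submission to `phase` fits within the limits.

    `previous` holds the timezone-aware datetimes of earlier submissions to
    the same phase; days are counted in UTC, as in the guidelines.
    """
    limit = LIMITS[phase]
    today = now.astimezone(timezone.utc).date()
    used_today = sum(
        1 for t in previous if t.astimezone(timezone.utc).date() == today
    )
    if used_today >= limit["per_day"]:
        return False
    if limit["total"] is not None and len(previous) >= limit["total"]:
        return False
    return True


now = datetime(2021, 5, 1, 12, 0, tzinfo=timezone.utc)
earlier_today = datetime(2021, 5, 1, 0, 30, tzinfo=timezone.utc)
print(can_submit("Test-Standard", [earlier_today], now))  # daily limit already used
print(can_submit("Test-Dev", [earlier_today], now))       # Test-Dev is more permissive
```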

The download page contains links to all VQA v2.0 train/val/test images, questions, and associated annotations (for train/val only). Please specify any and all external data used for training in the "method description" when uploading results to the evaluation server.

Results must be submitted to the evaluation server by the challenge deadline. Competitors' algorithms will be evaluated according to the rules described on the evaluation page. Challenge participants with the most successful and innovative methods will be invited to present.

Tools and Instructions

We provide API support for the VQA annotations and evaluation code. To download the VQA API, please visit our GitHub repository. For an overview of how to use the API, please visit the download page and consult the section entitled VQA API. To obtain API support for COCO images, please visit the COCO download page. To obtain API support for abstract scenes, please visit the GitHub repository.

For challenge-related questions, please contact visualqa@gmail.com. For technical questions related to EvalAI, please post on the VQA Challenge forum.

Frequently Asked Questions (FAQ)

As a reminder, any submissions before the challenge deadline whose results are made publicly visible on the "Test-Standard" leaderboard OR are submitted to the "Test-Challenge" phase will be enrolled in the challenge. For further clarity, we answer some common questions below:

- Q: What do I do if I want to make my test-standard results public and participate in the challenge? A: Making your results public (i.e., visible on the leaderboard) on the "Test-Standard" phase implies that you are participating in the challenge.

- Q: What do I do if I want to make my test-standard results public, but I do not want to participate in the challenge? A: We do not allow for this option.

- Q: What do I do if I want to participate in the challenge, but I do not want to make my test-standard results public yet? A: Submit to the "Test-Challenge" phase. This phase was created for this scenario.

- Q: When will I find out my test-challenge accuracies? A: We will reveal challenge results some time after the deadline. Results will first be announced at our Visual Question Answering Workshop at CVPR 2021.

- Q: Can I participate from more than one EvalAI team in the VQA challenge? A: No, you are allowed to participate from one team only.

- Q: Can I add other members to my EvalAI team? A: Yes.

- Q: Is the daily/overall submission limit for a user or for a team? A: It is for a team.
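The enrollment rule described in this FAQ can be summarized as a small predicate. This is a sketch for illustration, not part of EvalAI: the function name `is_challenge_entry` and its parameters are hypothetical, and the deadline constant is taken from the dates listed on this page.

```python
from datetime import datetime, timezone

# Submission deadline stated on this page: May 7, 2021 23:59:59 UTC.
DEADLINE = datetime(2021, 5, 7, 23, 59, 59, tzinfo=timezone.utc)


def is_challenge_entry(phase, submitted_at, public_on_leaderboard):
    """Return True if a submission counts as a challenge entry.

    `submitted_at` must be a timezone-aware datetime.
    """
    if submitted_at > DEADLINE:
        return False
    if phase == "Test-Challenge":
        # Any on-time Test-Challenge submission is enrolled.
        return True
    if phase == "Test-Standard":
        # Test-Standard submissions are enrolled only when made public.
        return public_on_leaderboard
    # Test-Dev submissions never count toward the challenge.
    return False


on_time = datetime(2021, 5, 1, 12, 0, tzinfo=timezone.utc)
print(is_challenge_entry("Test-Standard", on_time, True))    # public result: enrolled
print(is_challenge_entry("Test-Standard", on_time, False))   # private result: not enrolled
print(is_challenge_entry("Test-Challenge", on_time, False))  # enrolled without going public
```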