The VQA Challenge Winners and Honorable Mentions were announced at the VQA Challenge Workshop where they were awarded TitanX GPUs sponsored by NVIDIA!

The CodaLab evaluation servers are still open to evaluate results for the test-dev and test-standard splits.

To be consistent with the "Challenge test2015" phase (which closed at exactly 23:59:59 UTC on June 5), only submissions made to the test2015 phase leaderboard before 23:59:59 UTC on June 5 were considered towards the challenge, for all four challenges.

The challenge deadline has been extended to June 5, 23:59:59 UTC.

Papers reporting results on the VQA dataset should:

1) Report test-standard accuracies, which can be calculated using either of the non-test-dev phases, i.e., "test2015" or "Challenge test2015" on the following links: [oe-real | oe-abstract | mc-real | mc-abstract].

2) Compare their test-standard accuracies with those on the corresponding test2015 leaderboards [oe-real-leaderboard | oe-abstract-leaderboard | mc-real-leaderboard | mc-abstract-leaderboard].

Overview

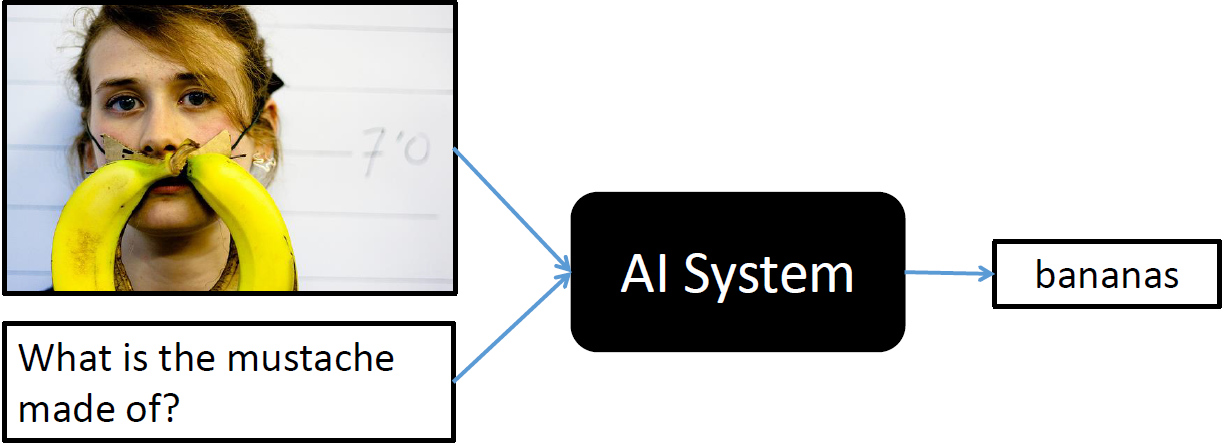

We are pleased to announce the Visual Question Answering (VQA) Challenge. Given an image and a natural language question about the image, the task is to provide an accurate natural language answer. Visual questions selectively target different areas of an image, including background details and underlying context. As a result, a system that succeeds at VQA typically needs a detailed understanding of the image and complex reasoning. Teams are encouraged to compete in one or more of the following four VQA challenges:

- Open-Ended for real images: Submission and Leaderboard

- Open-Ended for abstract scenes: Submission and Leaderboard

- Multiple-Choice for real images: Submission and Leaderboard

- Multiple-Choice for abstract scenes: Submission and Leaderboard

The VQA train, validation, and test sets, containing more than 250,000 images and 760,000 questions, are available on the download page. All questions are annotated with 10 concise, open-ended answers each. Annotations on the training and validation sets are publicly available.

Answers to some common questions about the challenge can be found in the FAQ section.

Dates

October 5, 2015 Version 1.0 of train/val/test data and evaluation software released

June 5, 2016 Extended Submission deadline at 23:59:59 UTC

After the challenge deadline, all challenge participant results on test-standard will be made public on a test-standard leaderboard.

Organizers

- Aishwarya Agrawal (Virginia Tech)

- Stanislaw Antol (Virginia Tech)

- Larry Zitnick (Facebook AI Research)

- Dhruv Batra (Virginia Tech)

- Devi Parikh (Virginia Tech)

Challenge Guidelines

Following MSCOCO, we have divided the test set for real images into a number of splits, including test-dev (same images as in the MSCOCO test-dev split), test-standard, test-challenge, and test-reserve (the other three splits are each roughly the same size as their MSCOCO counterparts, but the images might be different), to limit overfitting while giving researchers more flexibility to test their systems. Test-dev is used for debugging and validation experiments and allows for a maximum of 10 submissions per day. Test-standard is the default test data for the VQA competition. When comparing to the state of the art (e.g., in papers), results should be reported on test-standard. Test-standard is also used to maintain a public leaderboard that is updated upon submission. Test-reserve is used to protect against possible overfitting. If there are substantial differences between a method's scores on test-standard and test-reserve, this will raise a red flag and prompt further investigation. Results on test-reserve will not be publicly revealed. Finally, test-challenge is used to determine the winners of the challenge. For abstract scenes, there is only one test set, which is available on the download page.

The evaluation page lists detailed information regarding how submissions will be scored. The evaluation servers are open. We encourage participants to first submit to test-dev for either the open-ended or the multiple-choice task for real images to make sure that they understand the submission procedure, as it is identical to the full test set submission procedure. Note that the test-dev and "Challenge" evaluation servers do not have public leaderboards (if you try to make your results public, your entry will be filled with zeros).

To enter the competition, first you need to create an account on CodaLab. From your account you will be able to participate in all VQA challenges. We allow people to enter our challenge either privately or publicly. Any submissions to the phase marked with "Challenge" will be considered to be participating in the challenge. For submissions to the non-"Challenge" phase, only ones that were submitted before the challenge deadline and posted to the public leaderboard will be considered to be participating in the challenge.

Before uploading your results to the evaluation server, you will need to create a JSON file containing your results in the correct format as described on the evaluation page. The file should be named "vqa_[task_type]_[dataset]_[datasubset]_[alg_name]_results.json". Replace [task_type] with either "OpenEnded" or "MultipleChoice" depending on the challenge you are participating in, [dataset] with either "mscoco" or "abstract_v002" depending on whether you are participating in the challenge for real images or abstract scenes, [datasubset] with either "test-dev2015" or "test2015" depending on the test split you are using, and [alg_name] with your algorithm name. Place the JSON file into a zip file named "results.zip".
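The naming and zipping steps above can be sketched in Python as follows. This is a minimal illustration, not part of the official VQA tools: the helper names `results_filename` and `package_results` are hypothetical, and the `results` argument is treated as an opaque JSON-serializable object, since its required structure is defined on the evaluation page rather than here.

```python
import json
import zipfile
from pathlib import Path

# Allowed values, taken from the naming template described above.
TASK_TYPES = ("OpenEnded", "MultipleChoice")
DATASETS = ("mscoco", "abstract_v002")
DATA_SUBSETS = ("test-dev2015", "test2015")


def results_filename(task_type, dataset, datasubset, alg_name):
    """Build the results filename from the template (hypothetical helper)."""
    if task_type not in TASK_TYPES:
        raise ValueError(f"task_type must be one of {TASK_TYPES}")
    if dataset not in DATASETS:
        raise ValueError(f"dataset must be one of {DATASETS}")
    if datasubset not in DATA_SUBSETS:
        raise ValueError(f"datasubset must be one of {DATA_SUBSETS}")
    if not alg_name:
        raise ValueError("alg_name must be non-empty")
    return f"vqa_{task_type}_{dataset}_{datasubset}_{alg_name}_results.json"


def package_results(results, task_type, dataset, datasubset, alg_name, out_dir="."):
    """Write `results` as JSON inside results.zip in out_dir; return the zip path."""
    json_name = results_filename(task_type, dataset, datasubset, alg_name)
    zip_path = Path(out_dir) / "results.zip"
    with zipfile.ZipFile(zip_path, "w", zipfile.ZIP_DEFLATED) as zf:
        # The JSON file sits at the top level of the archive under its template name.
        zf.writestr(json_name, json.dumps(results))
    return zip_path
```

For example, `package_results(my_results, "OpenEnded", "mscoco", "test-dev2015", "mymodel")` would write "results.zip" to the current directory, containing "vqa_OpenEnded_mscoco_test-dev2015_mymodel_results.json". Here `my_results` and "mymodel" are placeholders.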

To submit your zipped result file to the VQA Challenge, click on the “Participate” tab on the appropriate CodaLab evaluation server [oe-real | oe-abstract | mc-real | mc-abstract]. Select the challenge type (open-ended for real, open-ended for abstract, multiple-choice for real, or multiple-choice for abstract) and the test split (test-dev or test). When you select “Submit / View Results” you will be given the option to submit new results. Please fill in the required fields, such as the method description, and click “Submit”. A pop-up will prompt you to select the results zip file for upload. After the file is uploaded, the evaluation server will begin processing. To view the status of your submission, please select “Refresh Status”. Please be patient: the evaluation may take quite some time to complete (~2min on test-dev and ~10min on the full test set). If the status of your submission is “Failed”, please check that your file is named correctly and has the right format.

After evaluation is complete and the server shows a status of “Finished”, you will have the option to download your evaluation results by selecting “Download evaluation output from scoring step.” The zip file will contain three files:

- vqa_[task_type]_[dataset]_[datasubset]_[alg_name]_accuracy.json: aggregated evaluation on test

- metadata: automatically generated (safe to ignore)

- scores.txt: automatically generated (safe to ignore)

Please limit the number of entries to the challenge evaluation server to a reasonable number, e.g., one entry per paper. To avoid overfitting, the number of submissions per user is limited to 1 upload per day and a maximum of 5 submissions per user. It is not acceptable to create multiple accounts for a single project to circumvent this limit. The exception is a group that publishes two papers describing unrelated methods; in this case, both sets of results can be submitted for evaluation. Test-dev, however, allows for 10 submissions per day. Please refer to the section on "Test-Dev Best Practices" in the MSCOCO detection challenge page for more information about the test-dev set.

The download page contains links to all VQA train/val/test images, questions, and associated annotations (for train/val only). Please specify any and all external data used for training in the "method description" when uploading results to the evaluation server.

Results must be submitted to the evaluation server by the challenge deadline. Competitors' algorithms will be evaluated according to the rules described on the evaluation page. Challenge participants with the most successful and innovative methods will be invited to present.

Tools and Instructions

We provide API support for the VQA annotations and evaluation code. To download the VQA API, please visit our GitHub repository. For an overview of how to use the API, please visit the download page and consult the section entitled VQA API. To obtain API support for MSCOCO images, please visit the MSCOCO download page. To obtain API support for abstract scenes, please visit the GitHub repository.

For additional questions, please contact visualqa@gmail.com.

Frequently Asked Questions (FAQ)

As a reminder, any submissions before the challenge deadline whose results are made publicly visible on the test-standard leaderboard or are submitted to the "Challenge" phase will be enrolled in the challenge. For further clarity, we answer some common questions below:

- Q: What do I do if I want to make my test-standard results public and participate in the challenge? A: Making your results public (i.e., visible on the leaderboard) on the CodaLab phase that does not have "Challenge" in the name implies that you are participating in the challenge.

- Q: What do I do if I want to make my test-standard results public, but I do not want to participate in the challenge? A: We do not allow for this option.

- Q: What do I do if I want to participate in the challenge, but I do not want to make my test-standard results public yet? A: The CodaLab phase explicitly marked with "Challenge" in the name was created for this scenario.

- Q: When will I find out my test-challenge accuracies? A: We will reveal challenge results some time after the deadline. Results will first be announced at our CVPR VQA Challenge workshop.

- Q: I'm getting an error during my submission that says something like, "Traceback (most recent call last): File "/codalabtemp/tmpa4H1nE/run/program/evaluate.py", line 96, in resFile = glob.glob(resFileFmt)[0] IndexError: list index out of range", how do I fix this? A: This typically happens when the results filename (i.e., the JSON filename) does not match the template, "vqa_[task_type]_[dataset]_[datasubset]_[alg_name]_results.json", as described above, so please try to rename it according to the template.
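To catch the filename mismatch from the last question before uploading, a local check along the following lines may help. It is only a sketch: the regular expression is derived from the template and allowed values given in the guidelines above, the function name `check_results_filename` is hypothetical, and the server's own matching logic is not shown in this document and may differ.

```python
import re

# Pattern derived from the documented template:
# vqa_[task_type]_[dataset]_[datasubset]_[alg_name]_results.json
RESULTS_NAME = re.compile(
    r"vqa_(OpenEnded|MultipleChoice)"
    r"_(mscoco|abstract_v002)"
    r"_(test-dev2015|test2015)"
    r"_(.+)_results\.json"
)


def check_results_filename(filename):
    """Return the template fields as a dict, or None if the name does not match."""
    match = RESULTS_NAME.fullmatch(filename)
    if match is None:
        return None
    task_type, dataset, datasubset, alg_name = match.groups()
    return {
        "task_type": task_type,
        "dataset": dataset,
        "datasubset": datasubset,
        "alg_name": alg_name,
    }
```

The check is case-sensitive, so a name such as "vqa_openended_mscoco_test2015_mymodel_results.json" is rejected, as is a bare "results.json".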